
Current projects (less briefly) – What’s so spatial about hearing? And what are we trying to learn?

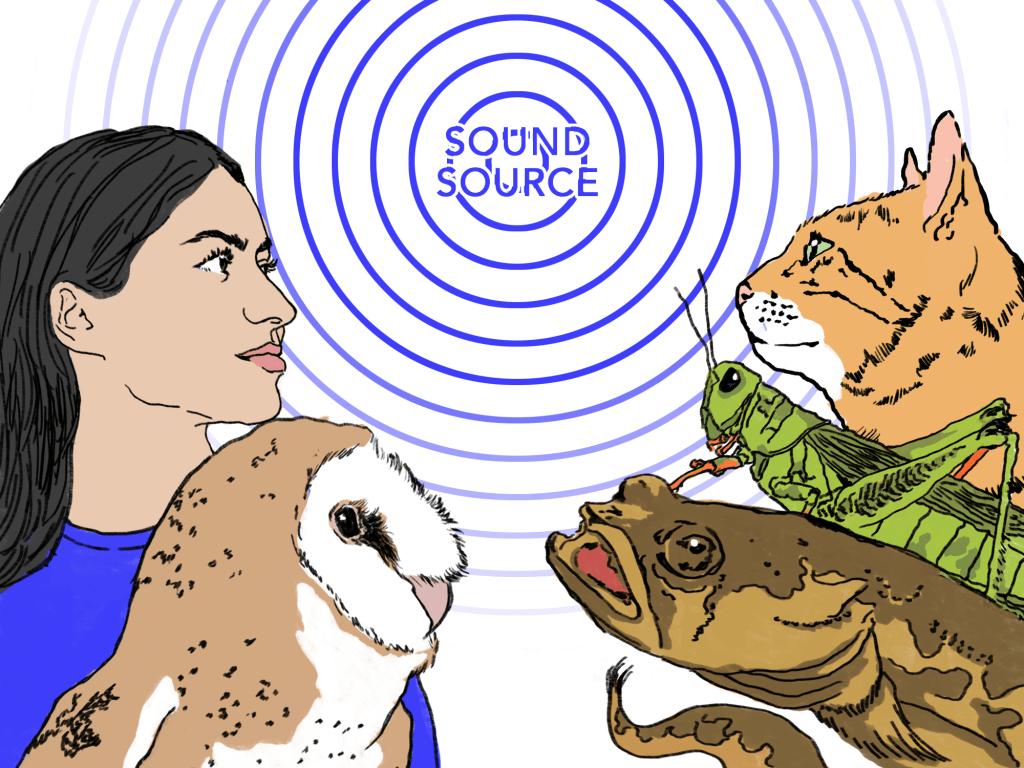

First, a bit of background. Our ability to tell where sounds are coming from – an ability shared by diverse organisms, from humans to cats to owls to minnows to fruit flies – depends on our remarkable sensitivity to subtle differences in the sounds reaching our two ears. And it comes so naturally that we can often take it for granted.

For example, imagine you are on a pleasant afternoon stroll through the woods, when you are jolted to attention by a snapping trail-side twig somewhere to your left. Another hiker? A woodland creature? A bear!? You reflexively whip your head to the side to find the source of surprise. But wait – how did you know which way to look?

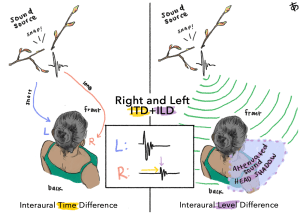

It’s actually quite simple: The sound from the twig reached your left ear before it reached your right because the sound’s path to your left ear was shorter. The sound was also a bit more intense at your left ear, mostly because the path to the right ear was blocked by your head.

These between-ear acoustic differences, known as interaural time differences and interaural level differences, reliably tell us whether a sound is to the left or right. They also enable us to separate simultaneous sound sources, even if those sources are all to the left or all to the right, and even if they are very close together.
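To give a feel for how small these time differences are, here is a minimal sketch that estimates the interaural time difference for a distant source using Woodworth's classic spherical-head approximation, ITD = (a / c) * (theta + sin(theta)). The head radius, the speed of sound, and the function name are illustrative assumptions rather than values from our own measurements, and a real head is not a sphere.

```python
import math

# Assumed values for illustration only (not measurements from this lab).
SPEED_OF_SOUND_M_S = 343.0  # air at roughly room temperature
HEAD_RADIUS_M = 0.0875      # a commonly quoted average adult head radius


def woodworth_itd(azimuth_deg, head_radius_m=HEAD_RADIUS_M,
                  speed_of_sound=SPEED_OF_SOUND_M_S):
    """Approximate interaural time difference (seconds) for a distant source.

    azimuth_deg: source direction in degrees, 0 = straight ahead,
                 positive = to the right, limited to [-90, 90].
    A positive result means the sound reaches the right ear first.
    """
    if not -90.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must be within [-90, 90] degrees")
    theta = math.radians(azimuth_deg)
    # Woodworth's spherical-head formula: extra path = a * (theta + sin(theta))
    return (head_radius_m / speed_of_sound) * (theta + math.sin(theta))


if __name__ == "__main__":
    for az in (-90, -45, 0, 45, 90):
        itd_us = woodworth_itd(az) * 1e6
        print(f"azimuth {az:+4d} deg -> ITD {itd_us:+7.1f} microseconds")
```

Under these assumptions, a twig snapping directly to one side produces a time difference of roughly two-thirds of a millisecond, and a source straight ahead produces none. Interaural level differences depend strongly on frequency and are not captured by this simple formula.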

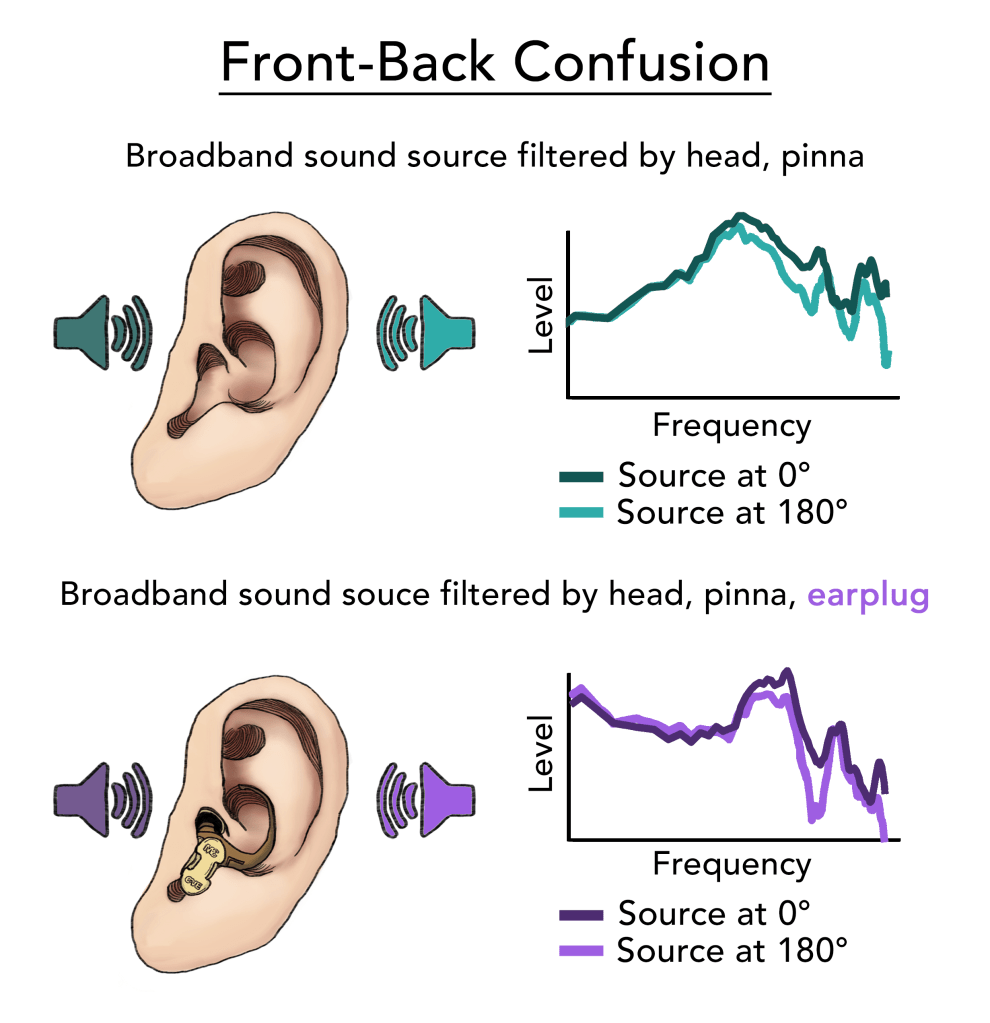

A somewhat more nuanced sound feature known as spectral shape provides a third bit of information that enables us to determine whether a sound source is in front or behind, above or below: In brief, the external ear or pinna (i.e., the cartilaginous flap on the side of your head – what anyone but a hearing scientist would call “the ear”) acts as an elaborate sound funnel, and the ridges and cavities of the external ear’s surface funnel sound differently depending on its direction of arrival. The resulting spectral shape cue can be critical for the avoidance of “front-back” and “up-down” confusions – in some settings, an essential matter of personal safety.

Determining the distance of the sound source turns out to be a more difficult problem, and our ability to do so depends significantly on the listening environment and other contextual cues. Compared to other auditory spatial capacities, our (human) auditory distance perception is not particularly good.

The early stages of the auditory brain include mechanisms for rapid and precise extraction of spatial acoustic cues carried by incoming sound, and under ideal conditions, most of us can discern the locations of sound sources separated by a few degrees or less (at typical source distances, inches apart!). Mere milliseconds after the sound reaches your ears, the angular location of the sound has been computed and relayed to higher centers of the brain, which simultaneously receive information to determine the identity of the sound.
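The "inches apart" arithmetic is easy to check. The sketch below converts an angular separation into the straight-line distance between two sources that are equally far from the listener (a chord of length 2 * d * sin(angle / 2)). The particular distances and angles are illustrative assumptions, chosen only to show the scale involved.

```python
import math

METERS_PER_INCH = 0.0254


def separation_m(angle_deg, distance_m):
    """Straight-line distance (meters) between two sources that are both
    distance_m from the listener and separated by angle_deg."""
    return 2.0 * distance_m * math.sin(math.radians(angle_deg) / 2.0)


if __name__ == "__main__":
    # Hypothetical listening distances and angular separations.
    for distance_m in (1.0, 2.0, 5.0):
        for angle_deg in (1.0, 3.0):
            inches = separation_m(angle_deg, distance_m) / METERS_PER_INCH
            print(f"{angle_deg:.0f} deg at {distance_m:.0f} m "
                  f"-> {inches:.1f} inches apart")
```

For example, two sources 1 degree apart at a distance of 2 meters are separated by about 3.5 centimeters, a little under an inch and a half.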

Complemented in no small way by the visual sense – you were probably hoping to see what snapped that twig, after all – the spatial hearing system is much more than a device to locate surprising sounds in the woods. Indeed, the brain continuously exploits spatial acoustic information to organize the “auditory scene” around you, enabling you to immerse yourself in the more pleasant sounds of your walk in the woods, to safely navigate a busy city street, or to converse with a new friend at a noisy party despite background banter.

We’ve had a reasonable grasp of the acoustic information involved in spatial hearing for a little over a hundred years, and a formal concept of the brain mechanisms involved for about fifty. Details of these brain mechanisms, the aspects of spatial hearing (perception) they control, and means of influencing (or recovering) their function in cases of impairment are the topics of active research in dozens of laboratories around the world. We maintain collaborative relationships with several of these laboratories, and are unaffiliated admirers of many others (you will find links to others’ lab websites on our Collaborators page). A few of the current projects in our own laboratory – which generally address matters of spatial hearing under non-ideal conditions – are summarized below (with a bit of technical detail you may prefer to read past).

Sound localization with hearing protection devices

Hearing protection devices (earplugs and earmuffs) can effectively mitigate the risk of noise-induced hearing loss for individuals who work in (or otherwise spend time in) noisy environments. However, despite decades of engineering innovation in hearing protector design (including the development of level-dependent hearing protectors that attenuate high-intensity sounds but leave low-intensity sounds audible), existing devices distort the spatial cues to sound location described above. Impacts on spectral shape cues are especially severe, leading to dramatic “front-back” and “up-down” errors. Such errors, which can misdirect the visual system and delay appropriate responses to critical signals, are unacceptable in high-risk settings (e.g., military and law enforcement settings). Personnel consequently may choose to forgo hearing protector use. The long-term costs of this reality are profound, both monetarily and in terms of health and quality-of-life impacts (e.g., in the Veteran population). Ongoing work in our laboratory aims to increase the use and usability of hearing protection devices via two parallel projects:

(1) Quantification and prediction of spatial hearing performance afforded by existing hearing protectors. Hearing protection devices come in many shapes and sizes, with differing acoustic performance characteristics that may be better or worse for particular use cases. Although all hearing protectors studied to date reduce sound localization performance, we are working with collaborators at Applied Research Associates, Inc. and the University of Colorado to develop a system to identify the best device(s) (and avoid the worst) when spatial hearing / situational awareness is at a premium. A battery of tests is enabling us to quantify acoustic and resultant perceptual performance across a broad array of hearing protector designs. Performance data will be used to support informed selection of hearing protectors appropriate to intended applications, and to accelerate the design of new and improved hearing protectors.

(2) Improved localization during hearing protector use via perceptual training. Although your brain is used to your own ears, it can be trained to interpret modified spatial acoustic information resulting from changes in effective ear shape – including the changes produced by earplugs. Work in this vein seeks to identify the basis of improvement (what information is the brain re-learning?) and to expedite the rate and extent of improvement, toward an implementable training protocol.

This work has been supported by grants from the Department of Defense.

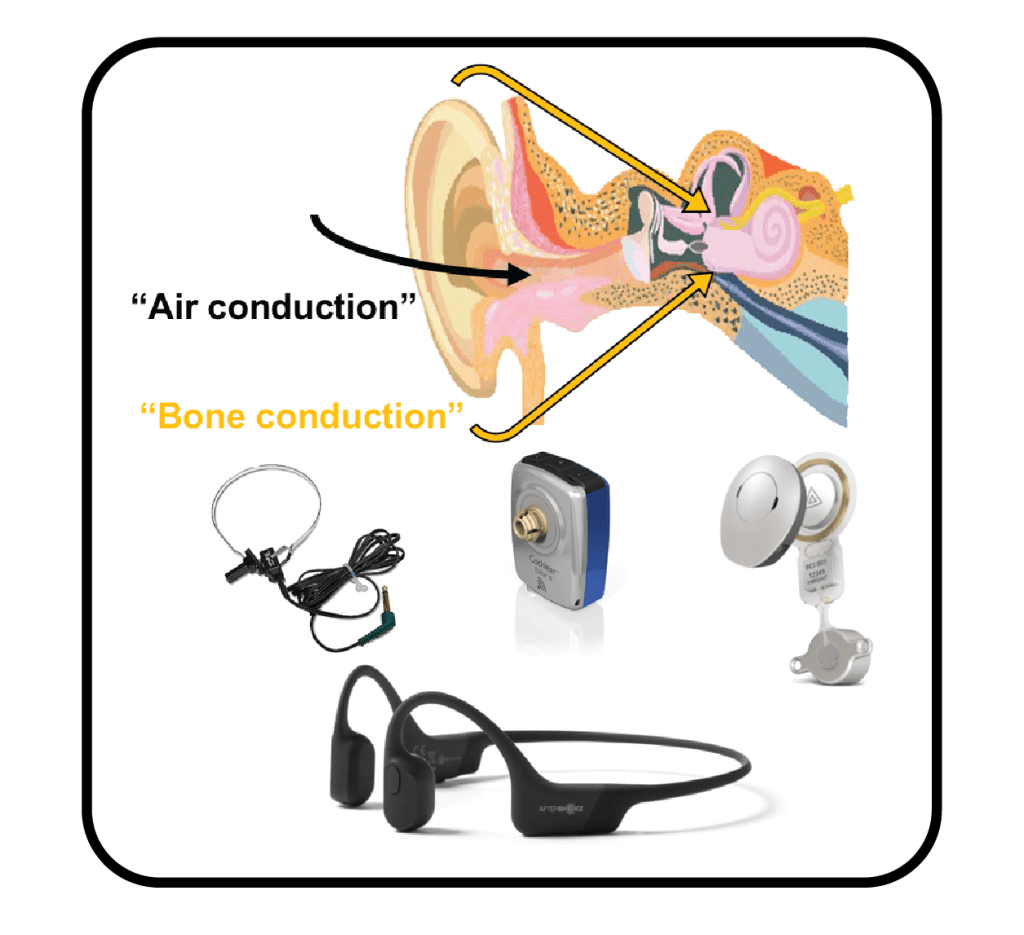

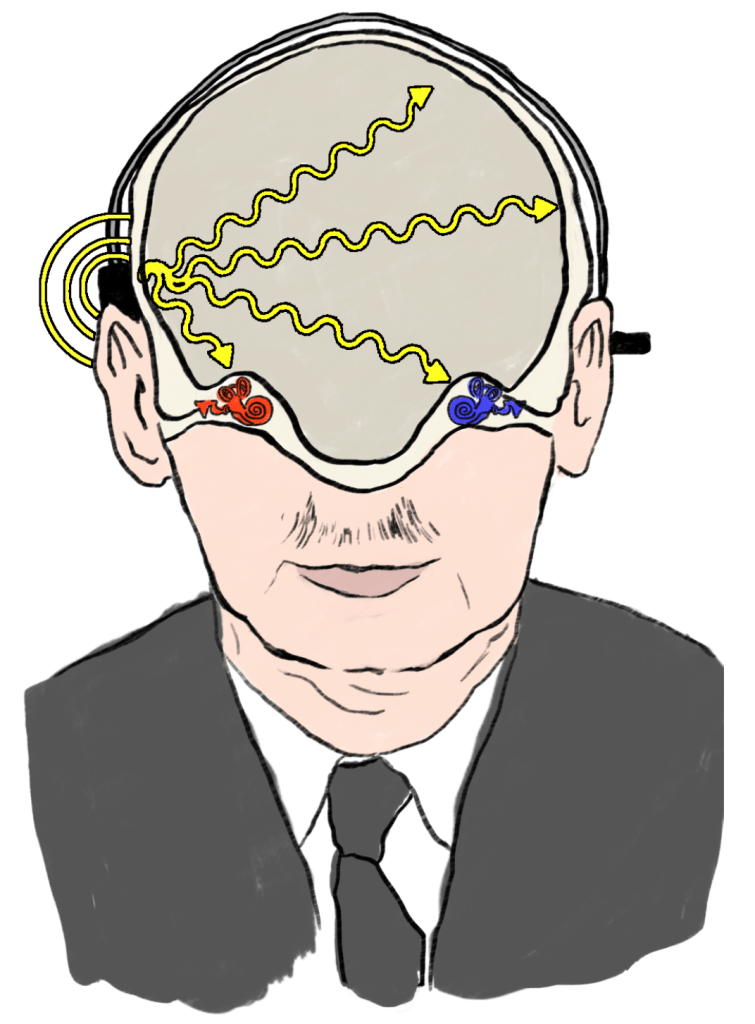

Understanding constraints on spatial hearing via bone conduction

In everyday hearing, sound reaches the inner ear (cochlea) primarily via conversion of airborne sound at the eardrum into a mechanical signal at the oval window, i.e. air conduction (AC) via the middle ear apparatus. A secondary mode of hearing, known as bone conduction (BC), bypasses the conductive pathway to cause pressure fluctuations within the cochlea via vibration of surrounding bone. We produce significant BC self-stimulation when we talk – one of the reasons our own voices sound different “on tape” – but the contributions of the BC pathway to the perception of externally generated sound are typically minimal, as airborne soundwaves are not efficiently transmitted into the comparatively dense bones and tissues of the head. However, BC signals are readily passed to the cochlea using bone-coupled mechanical transducers, and hearing tests employing such transducers have been used for over a century to diagnose conductive hearing pathologies.

Today, BC technology is routinely applied in both diagnostic and treatment settings. For individuals with conditions that disrupt the AC pathway to produce “conductive” hearing loss, BC is often the only viable pathway for acoustic stimulation of the affected ear(s). An increasing number of individuals with conductive hearing loss have thus received BC hearing aids, which efficiently stimulate the cochlea via a transducer coupled to the dense bone behind the pinna. BC hearing aids may also be indicated in cases of unilateral deafness, wherein a BC transducer can improve sensitivity to sound on the deaf side. In total, over 150,000 patients worldwide use some variety of BC hearing aid.

Traditionally, BC hearing aids were fit unilaterally (i.e., on one “ear” only) even in cases of bilateral hearing loss. Because the head is a continuous acoustic medium (in which both ears are embedded), BC stimulation at a single point on the head leads to stimulation of both ears. The level and timing of stimulation at each ear depend on the locus of the BC stimulation, and on individual anatomical differences. While it was once believed that the coupling of the two ears would prevent the transmission of useful interaural difference cues (ITDs and ILDs), a growing body of evidence suggests that two BC transducers are better than one (e.g., given bilateral conductive hearing loss, bilateral BC hearing aids provide better spatial hearing performance than a single BC hearing aid). Ongoing work in our lab seeks to identify the basis of such improvement, and to identify means to optimize the provision of interaural difference cues, toward better spatial hearing via BC in both clinical populations (users of BC hearing aids) and the general population (users of BC communications or personal audio devices).

This work is currently funded by a grant from the National Institutes of Health (NIDCD).

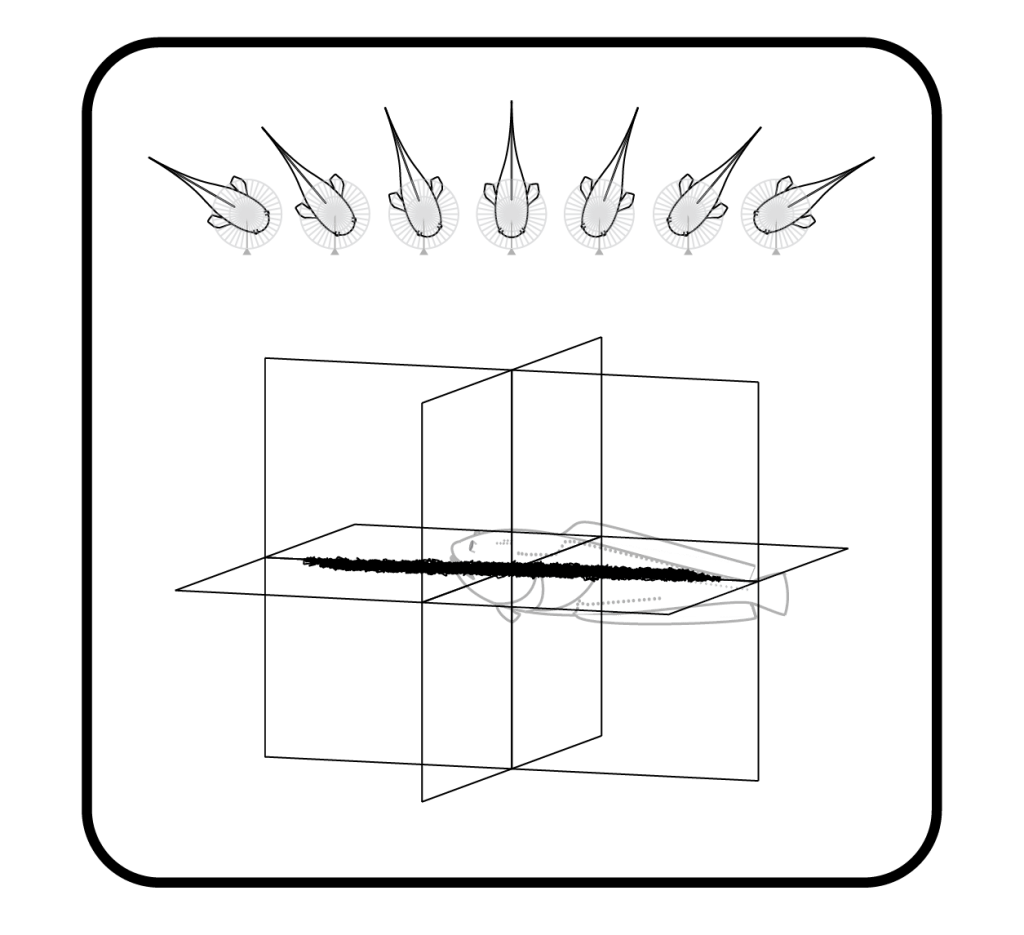

Comparative studies of directional hearing in fish

Fish comprise the largest group of extant vertebrates, over 30,000 species and counting (consider: there are only ~6000 extant mammalian species). Miles deep in the oceans, prolific in rainforest waterways, and hidden beneath polar ice – the diversity is impressive. But what do fish have to do with hearing science?

In broad strokes, the answer can be summarized in two parts:

(1) Fish have ears (similar to ours in some ways, very different in others). Some species use sound to communicate, and many face behavioral requirements fundamentally similar to ours – including the need to locate sources of sound. By learning how fish have “solved” similar problems under very different ecological constraints, novel solutions to unsolved hearing problems can be realized – a truth already evident in the design of hearing aid components inspired by ears of frogs and flies.

(2) Fish have hair cells fundamentally similar to those found in the human ear. These cells occur both in the fish inner ear organs and distributed across the body, where they make up the lateral line system. Because of this similarity, fish have become a popular model for studying the effects on hair cells of drugs, noise, and other insults that can cause hearing loss and deafness in humans. Exposure to such insults may happen artificially, e.g. in a dish in the lab, or “naturally” in the course of the animal’s interactions with its environment.

Several projects in this watery comparative world have developed over the past several years, fueled by collaborators including Joe Sisneros (University of Washington), Alli Coffin (Washington State University), and Tyler Jurasin (Quinault Indian Nation). This work has been funded by the National Science Foundation and by smaller state/university grants.